Overview

Space Persistency is made up of two components: a space data source and a space synchronization endpoint. These components provide advanced persistency capabilities that let the space architecture interact with a persistency layer.

The two components mentioned above are in charge of the following activities:

- The space data source component handles pre-loading data from the persistency layer and lazy loading data from the persistency layer (available when the space is running in LRU mode).

- The space synchronization endpoint component handles delegating changes made within the space to the persistency layer.

XAP's Space Persistency provides the SpaceDataSource and SpaceSynchronizationEndpoint classes, which can be extended and then used to load data from, and store data into, an existing data source. Data is loaded from the data source during space initialization (SpaceDataSource), and from then onwards the application works with the space directly.

Meanwhile, the space persists the changes made in the space via a SpaceSynchronizationEndpoint implementation.
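The division of labor described above can be sketched in plain Java. Every type below (`DataSourceSketch`, `SyncEndpointSketch`, `SpaceSketch`, `InMemoryStoreSketch`) is a hypothetical stand-in written for illustration, not a GigaSpaces class. The real SpaceDataSource and SpaceSynchronizationEndpoint have richer signatures, covered in their own API pages. The sketch assumes string keys and values and a store that pre-loads only its first few rows.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical stand-in for the data source role: reads from the persistency layer.
interface DataSourceSketch {
    Map<String, String> initialLoad(); // pre-load during space initialization
    String getById(String id);         // lazy load on a cache miss (LRU mode)
}

// Hypothetical stand-in for the synchronization endpoint role: receives changes.
interface SyncEndpointSketch {
    void onWrite(String id, String value);
}

// Toy "space": serves reads from memory and delegates changes to the endpoint.
class SpaceSketch {
    private final Map<String, String> memory = new LinkedHashMap<>();
    private final DataSourceSketch dataSource;
    private final SyncEndpointSketch endpoint;

    SpaceSketch(DataSourceSketch dataSource, SyncEndpointSketch endpoint) {
        this.dataSource = dataSource;
        this.endpoint = endpoint;
        memory.putAll(dataSource.initialLoad()); // initialization-time load
    }

    String readById(String id) {
        String value = memory.get(id);
        if (value == null) {                     // cache miss: ask the data source
            value = dataSource.getById(id);
            if (value != null) {
                memory.put(id, value);
            }
        }
        return value;
    }

    void write(String id, String value) {
        memory.put(id, value);                   // the application works with the space
        endpoint.onWrite(id, value);             // the space forwards the change
    }
}

// In-memory "database" that plays both roles for the demo.
class InMemoryStoreSketch implements DataSourceSketch, SyncEndpointSketch {
    final Map<String, String> rows = new LinkedHashMap<>();
    final int preloadCount; // how many rows initialLoad() hands to the space
    int lazyLoads = 0;      // counts getById calls, i.e. cache misses

    InMemoryStoreSketch(int preloadCount) {
        this.preloadCount = preloadCount;
    }

    @Override
    public Map<String, String> initialLoad() {
        Map<String, String> first = new LinkedHashMap<>();
        for (Map.Entry<String, String> e : rows.entrySet()) {
            if (first.size() == preloadCount) break;
            first.put(e.getKey(), e.getValue());
        }
        return first;
    }

    @Override
    public String getById(String id) {
        lazyLoads++;
        return rows.get(id);
    }

    @Override
    public void onWrite(String id, String value) {
        rows.put(id, value);
    }
}

class SpaceSketchDemo {
    public static void main(String[] args) {
        InMemoryStoreSketch store = new InMemoryStoreSketch(1);
        store.rows.put("a", "1");
        store.rows.put("b", "2");
        SpaceSketch space = new SpaceSketch(store, store);
        System.out.println(space.readById("a") + " lazyLoads=" + store.lazyLoads);
        System.out.println(space.readById("b") + " lazyLoads=" + store.lazyLoads);
        space.write("c", "3");
        System.out.println(store.rows);
    }
}
```

In this sketch the first read is served from the pre-loaded data, the second triggers a lazy load, and the write reaches the store through the endpoint role rather than through the application.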

Persistency can be configured to run in Synchronous or Asynchronous mode:

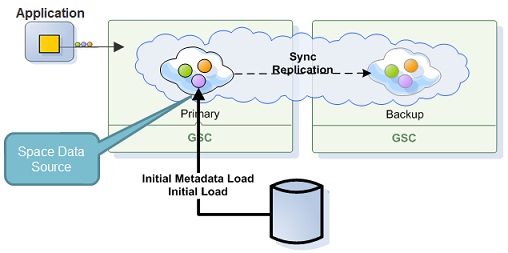

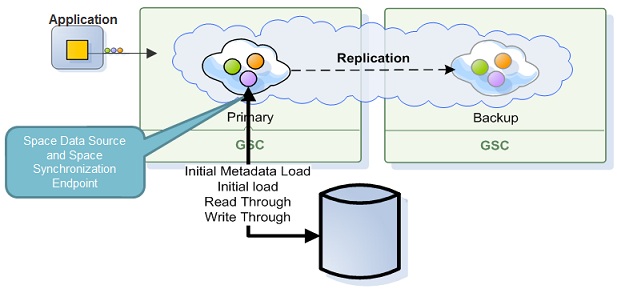

- Synchronous Mode - see Direct Persistency

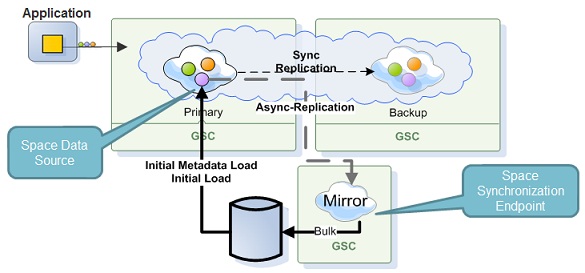

- Asynchronous Mode - see Asynchronous Persistency with the Mirror

The difference between the Synchronous and Asynchronous persistency modes concerns how data is persisted back to the database. In Synchronous mode, data is persisted immediately when the operation is performed, and the client application waits for the SpaceDataSource/SpaceSynchronizationEndpoint to confirm the write. In Asynchronous mode (Mirror Service), data is persisted reliably and asynchronously, with the mirror service acting as a write-behind mechanism. This mode provides maximum performance, because client operations do not wait for the database.
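The contrast between the two modes can be illustrated with a small, self-contained Java sketch. All class names here (`SlowStoreSketch`, `SynchronousSpaceSketch`, `AsynchronousSpaceSketch`) are hypothetical, and the single background thread is only a stand-in for the mirror. It does not reproduce the reliability guarantees of the actual Mirror Service. The 20 ms sleep is an assumed database latency.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical stand-in for the database; the sleep simulates write latency.
class SlowStoreSketch {
    final Map<String, String> rows = new ConcurrentHashMap<>();

    void persist(String id, String value) {
        try {
            Thread.sleep(20);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        rows.put(id, value);
    }
}

// Synchronous mode: write() returns only after the store has the change.
class SynchronousSpaceSketch {
    private final Map<String, String> memory = new ConcurrentHashMap<>();
    private final SlowStoreSketch store;

    SynchronousSpaceSketch(SlowStoreSketch store) { this.store = store; }

    void write(String id, String value) {
        memory.put(id, value);
        store.persist(id, value); // the caller waits for this to finish
    }

    String read(String id) { return memory.get(id); }
}

// Asynchronous mode: write() queues the change; one background thread
// (a stand-in for the mirror) persists queued changes in submission order.
class AsynchronousSpaceSketch {
    private final Map<String, String> memory = new ConcurrentHashMap<>();
    private final ExecutorService mirror = Executors.newSingleThreadExecutor();
    private final SlowStoreSketch store;

    AsynchronousSpaceSketch(SlowStoreSketch store) { this.store = store; }

    void write(String id, String value) {
        memory.put(id, value);
        mirror.submit(() -> store.persist(id, value)); // returns immediately
    }

    String read(String id) { return memory.get(id); }

    // Stops accepting writes and waits for queued changes to reach the store.
    boolean drain(long timeoutMillis) {
        mirror.shutdown();
        try {
            return mirror.awaitTermination(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
    }
}

class PersistencyModesDemo {
    public static void main(String[] args) {
        SlowStoreSketch syncStore = new SlowStoreSketch();
        new SynchronousSpaceSketch(syncStore).write("a", "1");
        System.out.println("sync store after write: " + syncStore.rows);

        SlowStoreSketch asyncStore = new SlowStoreSketch();
        AsynchronousSpaceSketch async = new AsynchronousSpaceSketch(asyncStore);
        async.write("x", "1");
        async.write("y", "2");
        System.out.println("drained: " + async.drain(5000));
        System.out.println("async store after drain: " + asyncStore.rows);
    }
}
```

The synchronous writer pays the store latency on every call, while the asynchronous writer returns as soon as the change is in memory and lets the background thread catch up.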

If you’re migrating from a GigaSpaces version prior to 9.7, please see the Migrating From External Data Source API page.

Space Persistency API

The Space Persistency API contains two abstract classes that you extend to customize the space persistency functionality. This customization allows GigaSpaces to interact with any external application or data source.

| Client Call | Space Data Source / Synchronization Endpoint Call | Cache Policy Mode | EDS Usage Mode |
|---|---|---|---|
| write, change, take, asyncTake, writeMultiple, takeMultiple, clear | onOperationsBatchSynchronization, afterOperationsBatchSynchronization | ALL_IN_CACHE, LRU | read-write |
| readById | getById | ALL_IN_CACHE, LRU | read-write, read-only |
| readByIds | getDataIteratorByIds | ALL_IN_CACHE, LRU | read-write, read-only |
| read, asyncRead | getDataIterator | LRU | read-write, read-only |
| readMultiple, count | getDataIterator | LRU | read-write, read-only |
| takeMultiple | getDataIterator | ALL_IN_CACHE, LRU | read-write |
| transaction committed | onTransactionSynchronization, afterTransactionSynchronization | ALL_IN_CACHE, LRU | read-write |
| transaction failed | onTransactionConsolidationFailure | ALL_IN_CACHE, LRU | read-write |

XAP’s built-in Hibernate Persistency implementation is an extension of the SpaceDataSource and SpaceSynchronizationEndpoint classes. For detailed API information, see Space Data Source API and Space Synchronization Endpoint API.

RDBMS Space Persistency

GigaSpaces comes with a built-in implementation of SpaceDataSource and SpaceSynchronizationEndpoint called Hibernate Space Persistency. To have the space pre-load its data, see Space Persistency Initial Load. You can also use the splitter data source, SpaceDataSourceSplitter, which lets you split data sources according to entry type.
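The idea of splitting data sources by entry type can be sketched as a simple router. The code below is not the SpaceDataSourceSplitter API. `TypedSourceSketch`, `SplitterSketch` and `MapSourceSketch` are hypothetical names, and routing on an exact `Class` match is an assumption made to keep the example short.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical stand-in for a data source that can look up one entry by id.
interface TypedSourceSketch {
    Optional<Object> getById(Class<?> type, Object id);
}

// Routes each lookup to the source registered for the requested entry type.
class SplitterSketch implements TypedSourceSketch {
    private final Map<Class<?>, TypedSourceSketch> routes = new HashMap<>();

    SplitterSketch route(Class<?> type, TypedSourceSketch source) {
        routes.put(type, source);
        return this;
    }

    @Override
    public Optional<Object> getById(Class<?> type, Object id) {
        TypedSourceSketch source = routes.get(type);
        // No source registered for this type: report "not found".
        return source == null ? Optional.empty() : source.getById(type, id);
    }
}

// Trivial map-backed source used to exercise the splitter.
class MapSourceSketch implements TypedSourceSketch {
    private final Map<Object, Object> rows = new HashMap<>();

    MapSourceSketch with(Object id, Object row) {
        rows.put(id, row);
        return this;
    }

    @Override
    public Optional<Object> getById(Class<?> type, Object id) {
        return Optional.ofNullable(rows.get(id));
    }
}

class SplitterSketchDemo {
    public static void main(String[] args) {
        SplitterSketch splitter = new SplitterSketch()
                .route(String.class, new MapSourceSketch().with(1, "from source A"))
                .route(Integer.class, new MapSourceSketch().with(1, 42));
        System.out.println(splitter.getById(String.class, 1));
        System.out.println(splitter.getById(Integer.class, 1));
        System.out.println(splitter.getById(Double.class, 1));
    }
}
```

The same id resolves to different rows depending on the entry type, which is the behavior a type-based split is meant to provide. A type with no registered source yields an empty result.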

NoSQL DB Space Persistency

The Cassandra Space Persistency Solution allows applications to push long-term data into a Cassandra database asynchronously, without impacting application response time. It can also load data from the Cassandra database, either when the GigaSpaces IMDG starts or lazily when a read from the GigaSpaces IMDG results in a cache miss.

The GigaSpaces Cassandra Space Persistency Solution leverages Cassandra CQL, the Cassandra JDBC Driver and the Cassandra Hector Library. Every write or take operation the application performs against the IMDG is delegated to the Mirror service, which uses the Cassandra Mirror implementation to push the changes into the Cassandra database.